- The SAFE Leader Insights by Mark McBride-Wright

The Secret Sauce of High-Reliability Organisations

What DEKRA Taught Me About Culture, Controls, and Catching Problems Before They Explode

One standout session for me came from DEKRA’s Rajni Walia and Mike Snyder, two professionals who brought both sharp analysis and heart to the stage. Their joint talk explored the elusive ingredients of “high reliability” in complex, high-risk systems, and what’s really required to get there.

This wasn’t a textbook safety lecture. It was a deep dive into cultural blind spots, decision fatigue, early warning signs, and how psychological safety sits at the heart of sustainable performance.

Here are five reflections I took away, some reaffirming what I already believe, others pushing my thinking forward.

Structure of DEKRA’s talk.

1. It’s Not About Being Perfect. It’s About Being Proactive.

Mike Snyder opened by acknowledging what many of us already know but rarely say aloud: most systems function well most of the time.

Most of the research... looked retrospectively... wouldn’t it be novel if we could take those types of insights and deal with them on a proactive before-the-incident-occurs basis?

What separates high-reliability organisations is their refusal to rely on hindsight. Instead, they develop the foresight to act before the fault lines crack wide open. The organisations that get this right don’t wait for disaster; they anticipate and adapt.

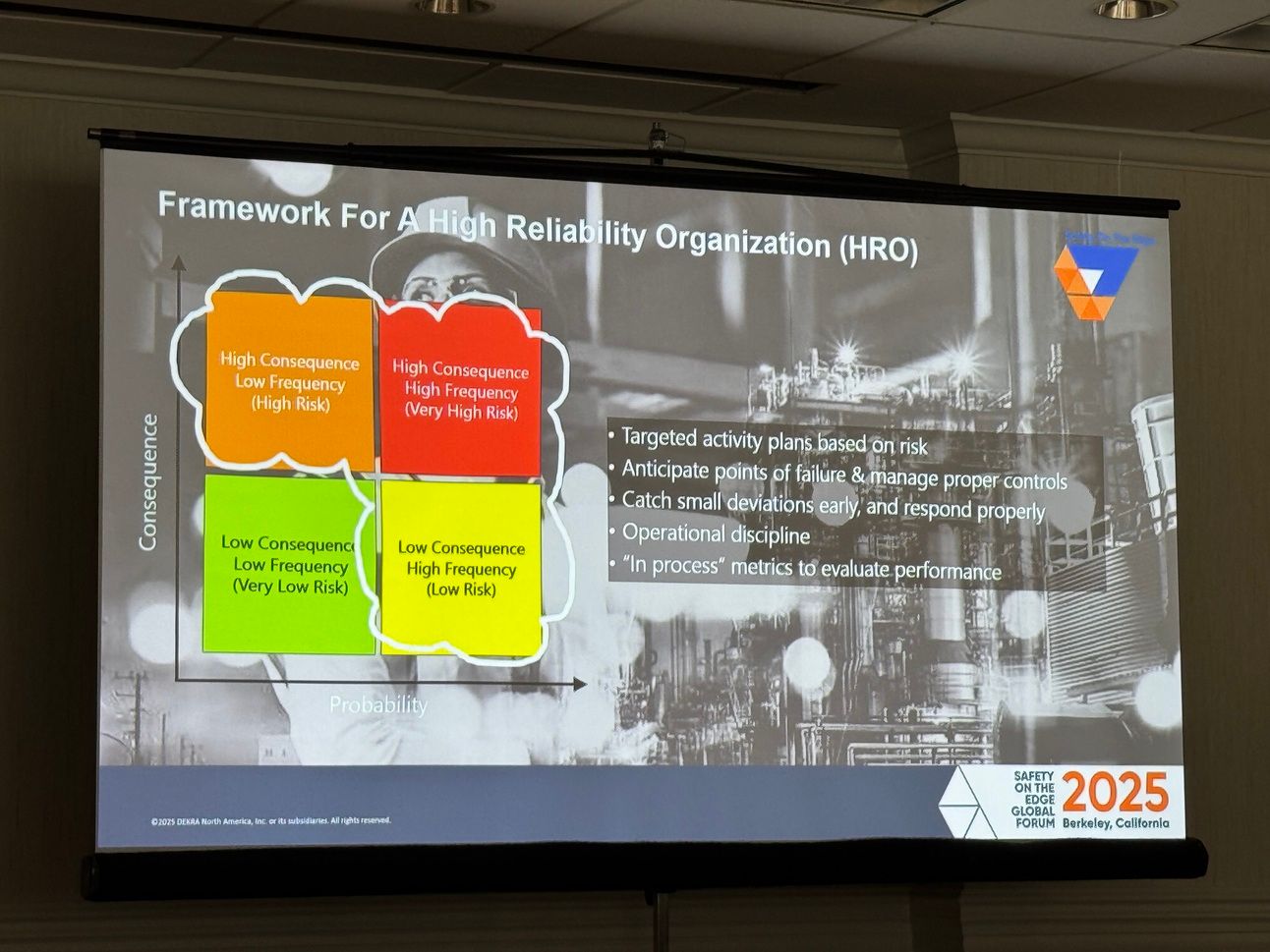

These organisations seem to have a dynamic way in which they focus their resources aligned with the risks that the organisation is exposed to.

2. You Can’t Manage What You Don’t Understand

One of the most grounded insights was around risk visibility. Mike described how too many leaders still operate with uncalibrated risk data, or worse, with blind spots.

Even executives have blind spots about areas within their organisation where risk doesn’t come in, or it doesn’t come in in a calibrated way.

This is where safety performance starts, not with TRIR, but with asking: what risks actually exist in our system? And how well are our controls truly performing?

Safety is not the absence of accidents, but the presence and the quality of the controls.

3. Designing for Human Error

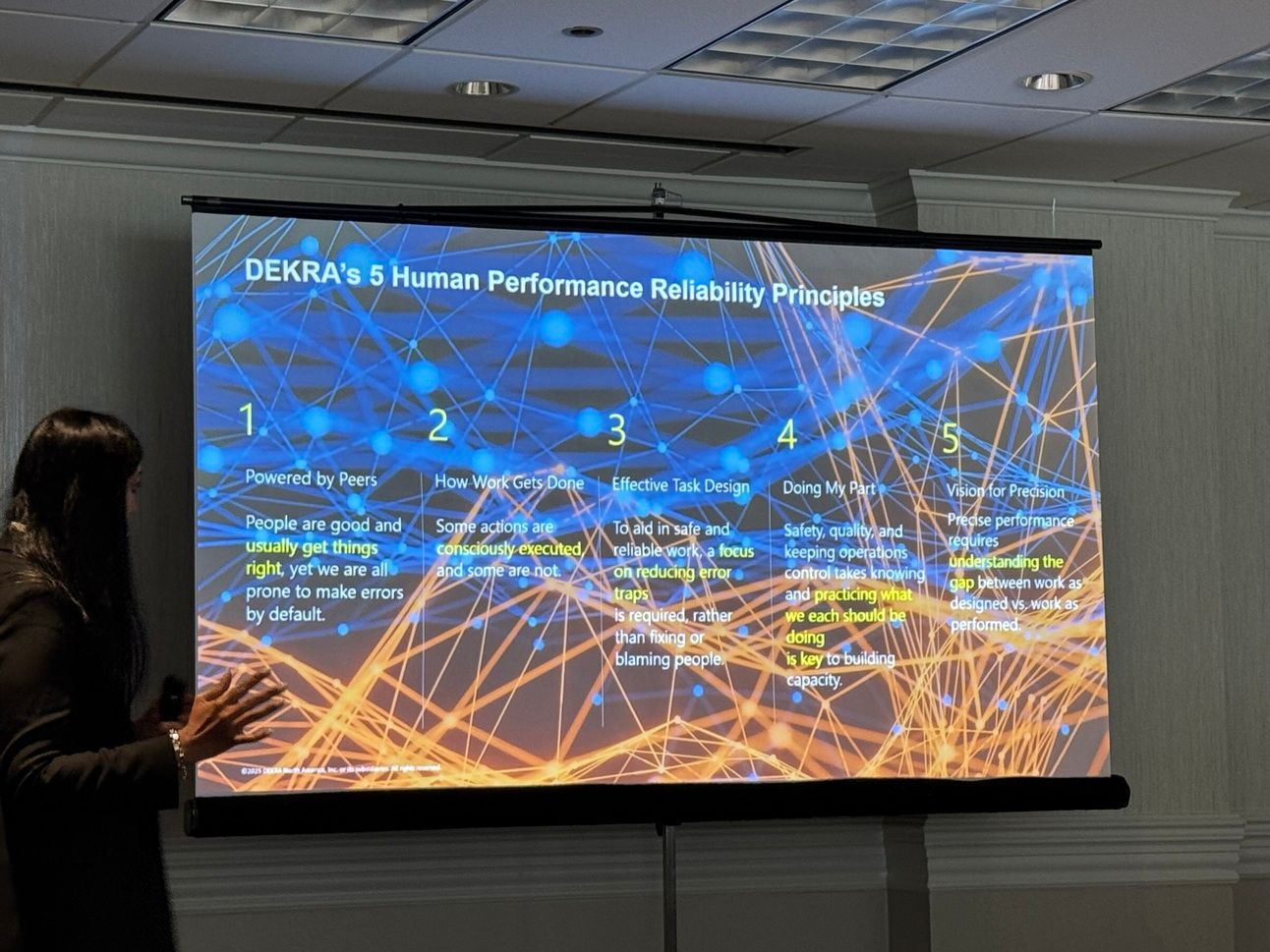

Rajni Walia brought the neuroscience into sharp focus. Her message was clear: humans are wired to make errors. The answer isn’t to punish that; it’s to design for it.

When people come to work, they don’t want to mess up... but yet we know that despite our best efforts, we’re going to make error.

DEKRA’s framework isn’t about compliance. It’s about building systems that account for human limitations and empower individuals to respond better in real time.

We want to design work to minimise those error traps, to minimise those sensitivities.

She also offered a respectful challenge to the Human and Organisational Performance (HOP) orthodoxy, saying:

Yes, an organisation has a responsibility to design perfect systems... but individuals also have a responsibility to keep themselves safe.

That’s a powerful rebalancing between system accountability and individual agency.

4. Psychological Safety Is the Gateway Drug to Vigilance

Mike shared that across 100+ catastrophic incident investigations, every single one had weak signals beforehand.

In every catastrophic incident I’ve investigated, there have been dozens, and in some cases, hundreds of early warning signs.

The question is not whether those signs existed. The question is: were they heard? And if they were heard, were they acted on?

This is where culture becomes safety’s multiplier.

The culture of the organisation will give you... a competitive advantage in where these issues can be raised and how they can be properly managed.

And just as crucially: leaders must close the loop.

Leaders are always afraid to say, ‘You’ve told me something, we’ve looked into it, and I can’t act on it.’ That’s okay. The fact that you close the loop will get you the investment for the next time.

5. Learning Isn’t Optional. It’s Organisational Oxygen.

Too often, we gloss over incidents with “lessons learned” presentations and compliance box-ticking. But real learning? That takes vulnerability.

If we don’t truly and accurately reflect where we’ve had deviations and share that, we’re going to repeat them. We’re going to repeat them again.

Mike was clear-eyed about the blockers: yes, legal teams can be a barrier, but that doesn’t mean we give up. He cited trusted resources like the CCPS Process Safety Beacon and EPSC’s process safety sheets as practical starting points for storytelling.

There are ways to share information... that address tastefully the legal concerns that are there.

In DEKRA’s world, learning is embedded, shared, and measured. It’s not a one-off; it’s how the culture breathes.

Final Thought: The Oreo Metaphor

Mike used a metaphor I loved: high reliability is like an Oreo.

The top layer: inventory your risks.

The bottom layer: track metrics that matter.

The filling? Culture.

Those cultural attributes... if they are performing well at high reliability levels, you have a pretty interesting way that you can manage the risks.

When culture holds the system together, safety becomes more than compliance; it becomes resilience.

What This Means for My Work

This session reaffirmed something I hold close in The SAFE Leader:

Paper safety isn’t enough.

We must move from tick-box processes to truth-telling cultures.

Leaders need better radar, and more courage to act on what it tells them.

If we want safer systems, we need safer conversations. And we need to treat psychological safety as the primary control measure, not an afterthought.

I’d love to hear your reflections. What does “failing safely” look like in your world? And what would it take for your organisation to hear signals before they become sirens?
